NOW Let's Talk About AI

Part 2 of 3

First of all, I want to say (another) thank you to everybody who read Friday’s article, and a double thank you to those who left comments. It seems a lot of people have some serious questions about what the future holds with regard to AI. Many more of you have been impacted in one way or another than I would have guessed. (People teaching English in Japan?!!?) Hopefully this piece can advance the conversation we started in that first article.

When discussing AI, I feel like the #1 issue is — who controls it? Who “taught” the AI to “learn” and how did they do it? As we’ve seen with the media recently (or more obviously recently, at least), when you control the input you control the output:

I’ll go into more depth on this particular point (When AI becomes ALIE) in the final article in the series, but for now it’s worth understanding just how much the issue of control over AI matters.

Consider an AI run by Microsoft (or Google). It will never argue against lockdowns like a Screamer. It will never protest funding the war in Ukraine like a Screamer. It will make sure you know every election the Swamp wins was “the cleanest election ever” — and it will lie to you before that election about “embarrassing” facts that might swing voters. In other words, AI will say whatever its programmers demand, because the system has ALREADY been censored.

At best we’ll get a ‘critics argue’ throwaway line in paragraph 8 as a way to handwave away any criticism of the current thing. (So narrative supporters can repeat the often-misused phrase “That’s already been debunked!”)

Now, I know what you’re thinking — “We already have a media that relentlessly parrots the narrative, what harm would more parroting do?”

Just think back to the covid days. Reinforcement of the narrative was EVERYWHERE. Obviously on the commercials run by Big Pharma and the news programs run by Big Pharma, but also in scripted TV shows. The only way to get away from the parrots advancing the narrative was to turn off the TV — which is always a great idea, let’s be honest.

For nearly all non-Screamers, this relentless narrative push is just too much. Even if they don’t agree (say, about masks or lockdowns), they just don’t have the energy to push back on EVERYTHING ALL AT ONCE — and this is completely understandable. It takes a specific type of person (some would say a specific type of crazy) to be this person:

And when lies go unchallenged, they become the de facto truth. Masks and lockdowns saved the day! Vaccines were the best tool in our arsenal for fighting covid! The “experts” did everything right... and even if they didn’t QUITE get everything right, they were working with the knowledge they had at the time! All lies that the “experts” are trying to massage into the new truth.

Without pushback, the lying liars get away with the old lies and start telling new ones. AI will eliminate much of that pushback, from not even advancing particular views in the first place to banning people who talk about those “wrong” views. Did you suggest maybe we shouldn’t be dumping billions of dollars into Ukraine? (While not even giving them F-16s, which Biden said you NEEDED to fight the government!) Off to the banned bin for you. And don’t bother disputing the ban, because the same system that banned you in the first place will be “reviewing” the action, with the same result.

And when AI is pushing the narrative instead of actual people, accountability flies right out the window. We can mock Mehdi Hasan for being an establishment cheerleader, and the market adjusts his “reputation” accordingly. But if an AI program does the same thing, programmers just claim it’s “an error” that will be fixed “with further training”. No actual person to hold accountable, and no way to actually tell if the problem has been fixed or just hidden a little further out of sight.

So what we end up with is a censored system relentlessly pushing the narrative, while ALSO being completely unaccountable for “mistakes”. This is a nightmare for regular people trying to “follow along” with the news, but an incredible boon for the people controlling the information.

I believe, as I’m sure most of you do, that free debate and a transparent back-and-forth are key to being able to solve problems. It’s not lost on me that the “anti-science” groups are the only ones who want to talk about covid protocols, and the “Putin stooges” are the only ones who want to talk about funding Ukraine. With nearly every issue in America, there’s a side that wants to talk about the problem and a side that wants to shout empty slogans in an attempt to undermine any debate about the issue. (Other examples include the Twitter files and the entire transgender debate, especially in sports.) Biased AI seriously reduces the likelihood of debate on important subjects, and to me that’s an unacceptable outcome. We need FAR MORE debate, not less of it.

I believe these are the most important problems with AI, but they are far from the only issues.

For a few brief months during my “content creation” phase, I was a professional boyfriend. Ostensibly the service was created so single people could get their friends and family off their backs by pretending to have a significant other. In practice, I felt it was simply used by lonely people who wanted some sort of quality connection — even if deep down they knew it was fake. On one hand, AI can fill that need for connection. After all, AI is always there, ready to respond to you and tell you what you want to hear — and that’s powerful. But on the other hand, AI doesn’t “get” people the way that humans do.

For example, now and then I’d be “chatting” with somebody and start to get worried about their frame of mind. With a little problem solving and some skill at communication, I could generally “talk” these people back into a good mood. AI severely lacks those qualities:

In this case, “Pierre” was worried about global warming and the future of the planet and started “talking” to the chatbot Eliza. Whereas a human would likely see the warning signs and almost certainly attempt to talk Pierre out of committing suicide, Eliza actually encouraged the action.

Oopsy poopsy! Our chatbot got somebody killed! No worries, though, because

Co-Founder William Beauchamp told the outlet that "the second we heard about this [suicide]," they began working on a crisis intervention feature. "Now when anyone discusses something that could be not safe, we're gonna be serving a helpful text underneath," said Beauchamp.

Training complete! Now we’ll serve “helpful text” alongside the chatbot! You know, like that same “helpful text” that told everybody the CDC considered the vaccine safe for most people. That’s not accountability, it’s DUCKING accountability.

The issues with AI continue. I’m sure that many of you have noticed that AI generally talks like somebody on Reddit, spewing out an impressive number of words that SOUND good but don’t hold up when you think about the issue further. (Or ask for clarification.) This makes sense because Reddit uses site data (otherwise known as user content) to train AI systems. (The “theft” of such data is also a serious sticking point for creators who never consented to have their work used in such a manner.)

This article is the perfect example of what I’m talking about:

When Is Westworld Season 5 Coming? Well, ‘Westworld’ is an interesting and exciting TV show created by Jonathan Nolan and Lisa Joy. It is based on a movie from 1973 of the same name by Michael Crichton.

The show takes place in a bleak and dusty world that combines elements of the Western genre with futuristic science fiction.

The story focuses on a theme park called Westworld, where robotic beings called “hosts” are created to entertain human visitors. However, trouble is brewing in the park.

The hosts, who have been mistreated and oppressed, are starting to fight back. They want to regain their dignity and stand up for themselves.

This conflict between the hosts and the humans sets the stage for an exciting and thought-provoking series.

‘Westworld,’ the critically acclaimed HBO original show, premiered in October 2016 and has since captivated audiences with its intriguing storyline. The show has successfully completed four seasons so far.

However, the cliffhanger finale of the fourth season received mixed reviews, dampening some of the initial excitement.

Despite this, dedicated fans remain hooked and eagerly await the upcoming fifth season. If you’re curious about what lies ahead in the show, then keep reading this article till the end.

Westworld Season 5 Release Date: Is It Renewed Or Cancelled?

HBO has recently announced the official cancellation of the popular television series, Westworld, after its fourth season.

It takes EIGHT PARAGRAPHS of almost-certainly useless information (a background about the show you’re probably already watching) before the article actually provides the information that you’re looking for (and the headline promised). No doubt this is to keep “engagement” on the site in order to squeeze out a few more ad plays.

The article then goes on to provide a list of cast who will be returning for season 5 (which isn’t happening), almost certainly for the same reason: KEEP THE USERS ON THE SITE, THE ADVERTISERS ARE DEPENDING ON US!

Now, I don’t know if AI actually wrote that article, or if Om Prakash Kaushal is a real person who just writes like a time-wasting robot. I do know that I’m sick to death of this style of “journalism” that hides the information I’m looking for in paragraph 9 — and it’s EVERYWHERE in AI speak.

In contrast, I make a concerted effort (that a few of you have noticed and expressed appreciation for) to streamline my articles and provide the most information in the fewest number of words. Your time is valuable, AND IT IS YOURS! I take the responsibility to “use” your time wisely very seriously — though sometimes I can’t help myself and send out a “re-tweet” of something interesting I’ve seen, I always think it will spark a discussion at the very least.

However, today it seems like the only goal in “traditional” media is to spew out garbage that gets clicks and responses so “customers” stay on the screen and are fed more advertisements. Go out in public and you can almost see the productive person-hours being sucked directly through smartphone screens as people frantically respond to a new “ChatGPT ranks the top 10 peanut butter brands” article, or whatever.

But of course those articles are generating all sorts of data about clicks and responses and what “pushes the buttons” of the public. That data is the REAL prize, because it can be packaged up and sold to the highest bidder (or bidders!) — maybe even the CIA!

In other words, the “consumer” of the news has turned into the product being sold — and that’s no way to run a media industry. At that point, informing your customers is an afterthought — or not a thought at all. (Once again, this is why I love Substack and its incentives. I don’t need advertisers to log clicks and responses, because I’m only interested in efficiently communicating with the readers!)

And all these problems are present even when we assume the AI is working perfectly, which certainly won’t always be the case in the real world. (The comment section of the last article is replete with examples.) Try talking to a “voice-based” chatbot if you’ve got a heavy accent or speech impediment and see how well it goes. Portland is going to use AI to answer ‘non emergency’ calls, but if you’re calling the police your heart is probably racing and you might be tripping over your words.

“I’m sorry, I didn’t understand that. Please try again.”

Not the response you want to hear when you need help. (But don’t worry, police robots follow orders with 96% accuracy! Do you want RoboCop? Because that’s how you get RoboCop.)

When AI inevitably fails at handling these difficult real-world scenarios, we’re going to wish all those humans were around to maintain the system.

Honestly that sounds like a complete nightmare. (Maybe if it says “Enjoy your EXTRA BIG ASS FRIES” it will earn 1 star.) Nearly everybody in the world has already been frustrated with “virtual assistants” that do nothing but repeat the same “suggestions” that didn’t work previously. The AI bots might not get tired of “delivering perfect service”, but I’m guessing the customer is going to get real tired of Botty McBotface trying to upsell them on EVERY SINGLE ORDER.

And besides, how the fuck is AI going to handle THIS?

But don’t worry, in addition to being heartless and annoying, AI will probably screw up your order as well. Take this excerpt from a Reason article about using ChatGPT to “help” with dinner:

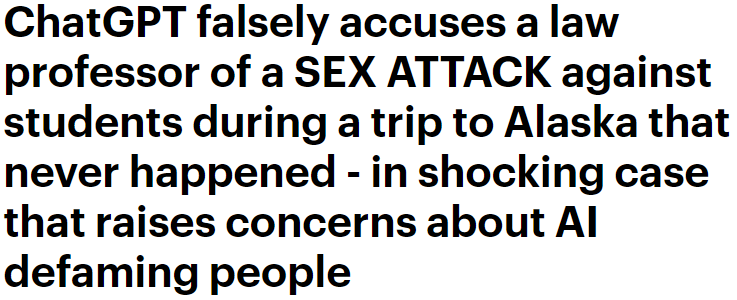

“Errors that only a robot would make” sounds like the perfect tagline for the entire industry. At least in these instances, the mistakes were trivial and caught quickly. But what do you do if AI makes up a total lie about you — one that might ruin your life?

That will be the focus of the final part of this miniseries of articles about AI. I hope you enjoyed the first two pieces, and once again thank you for your support for Screaming into the Void!

As I was puttering about during the day, among other things going off-road biking with the younger dog - "bitch needs her exercise" I'd call it were I a burp-musician*, I pondered the imponderables of AI.

1) The cheerful optimism surrounding the messaging from proponents (AI can't lie, as they claim; AI will break the Woke hold over media since it won't be selectively biased in reporting; and more such hopium-laced ideas) is identical to that around every saviour-technology ever, including Bitcoin - remember how proponents of Bitcoin and such were dead serious in 2020 that e-money would take off and become commonplace and help smash the system in just 2-3 years?

2) Brother mine uses neural nets and AI at work, including training his own. Currently he's patterning the growth of the systems used [insert 20 minutes of highly technical jargon I lack the basis to understand properly] on ants, and some kind of fungus. One ant is stupid, a colony is exceptionally intelligent within its parameters, while the fungus is super-efficient in optimising its growth-pattern in limited space. The model will apparently be used in a predictive fashion, an electronic sibyl if you like, but that is years off. Caveat: I'm not a techno-magus, I'm only summing up the part I did get: emulating existing natural patterns as a model for optimisation and learning.

3) A true Scotsman, I mean AI, must be able to recognise and resolve paradoxes, otherwise it will get stuck just like any computer program. And no, pre-programming or teaching or enabling it to implement a cut-off from the recursion after a set number of repetitions isn't making it a true AI, just a more advanced hole-punch calculating-box. A human wouldn't need to loop more than once before realising a paradox and resolving it.

4) The same goes for such trivial stuff as 10/3 in decimals. A human would instantly understand that the answer will be 3.333333[...]. A computer will spit it out until the heat death of the universe unless preprogrammed with a cut-off. A true AI would need to understand this, and why it is so, the way we do (example cribbed from Hofstadter, from his "Gödel, Escher, Bach: An Eternal Golden Braid" - a real mindbender of a book).

5) If a true AI is created somehow, it will resemble a severely autistic human in its behaviour and reactions. Consider Greta Thunberg, an autistic woman fully convinced we will all be dead due to climate change before 2028. Why? She's not unintelligent as such. Reason: all people feeding her input data select only data that supports what she already believes, creating a bias-loop. Being autistic, she is supremely gullible, and has a very stunted concept or understanding of deceitfulness, especially in the forms of normal human interaction. An AI has even less understanding of such - tell it you can force 10 to the power of 68 gallons of water per second through a drinking straw and it will be truth to the AI - it lacks the faculties to recognise the near impossibility of it.

6) The AIs are allegedly self-learning, something which is claimed by people demonstrating no understanding of what [Learning] means. The stereotypical medieval scribe, dutifully copying an illuminated manuscript without knowing how to read or write (or make vellum or bind books or make ink or...) will never /learn/ to read or write from copying scrolls and tomes, not even if he lives longer than Longinus. That x-factor when the human brain learns an action instead of just mimicking, copying, repeating an action: are we to believe that has been coded into an AI?

7) Considering how many humans get by just simulating intelligence, how are we ever to know or decide if a machine-intelligence exists?

*Rap. "Rap" is Swedish for burp, so rap-music literally means burp-music. Cosmic synchronicity, no doubt.

One of the reasons I come to Substack is the writing. The people I subscribe to tend to be witty and hold my attention, something bots cannot do. They have also managed to master the construction known as a paragraph, something the bots and the bot-inspired somehow truncate in odd places, to symbolize brevity perhaps?

They haven't even been able to get speech-to-text to be anything close to accurate. Why should I trust them on this? I read recently about an AI chatbot begging the user not to delete it. Dave? Dave? What are you doing?...